This Cognitive Bias kills our ability to thrive in complexity

Does this sound familiar?

I was a Program Manager for over a decade, during which time I must have facilitated dozens of “project post-mortems”, a term that always bothered me, since in none of those projects had anyone died. One of the key “Lessons Learned” from nearly every post-mortem I facilitated was some variation on this: “We Should Have Planned Better”.

Of course, we always did the best planning we could, given what we knew at the time. Software development, like many types of work, is inherently unpredictable. We always learned quite a bit more as we went along building things, testing them, and reviewing them with customers and stakeholders. In all of those post-mortems, we learned the wrong lesson. We fell prey to a cognitive bias known as Hindsight Bias.

Hindsight Bias

Also known as the knew-it-all-along effect, Hindsight Bias is the inclination, after an event has occurred, to see the event as having been predictable, despite there having been little or no objective basis for predicting it.

Some common examples of this bias include:

- I knew that stock was going to rise.

- Of course my team came back to win the game at the end; they always do.

- I told everyone that candidate x would be elected, and I was right.

Of course, if people actually knew all of these things, they would have made a fortune by now, either through playing the stock market or gambling on their team (or candidate).

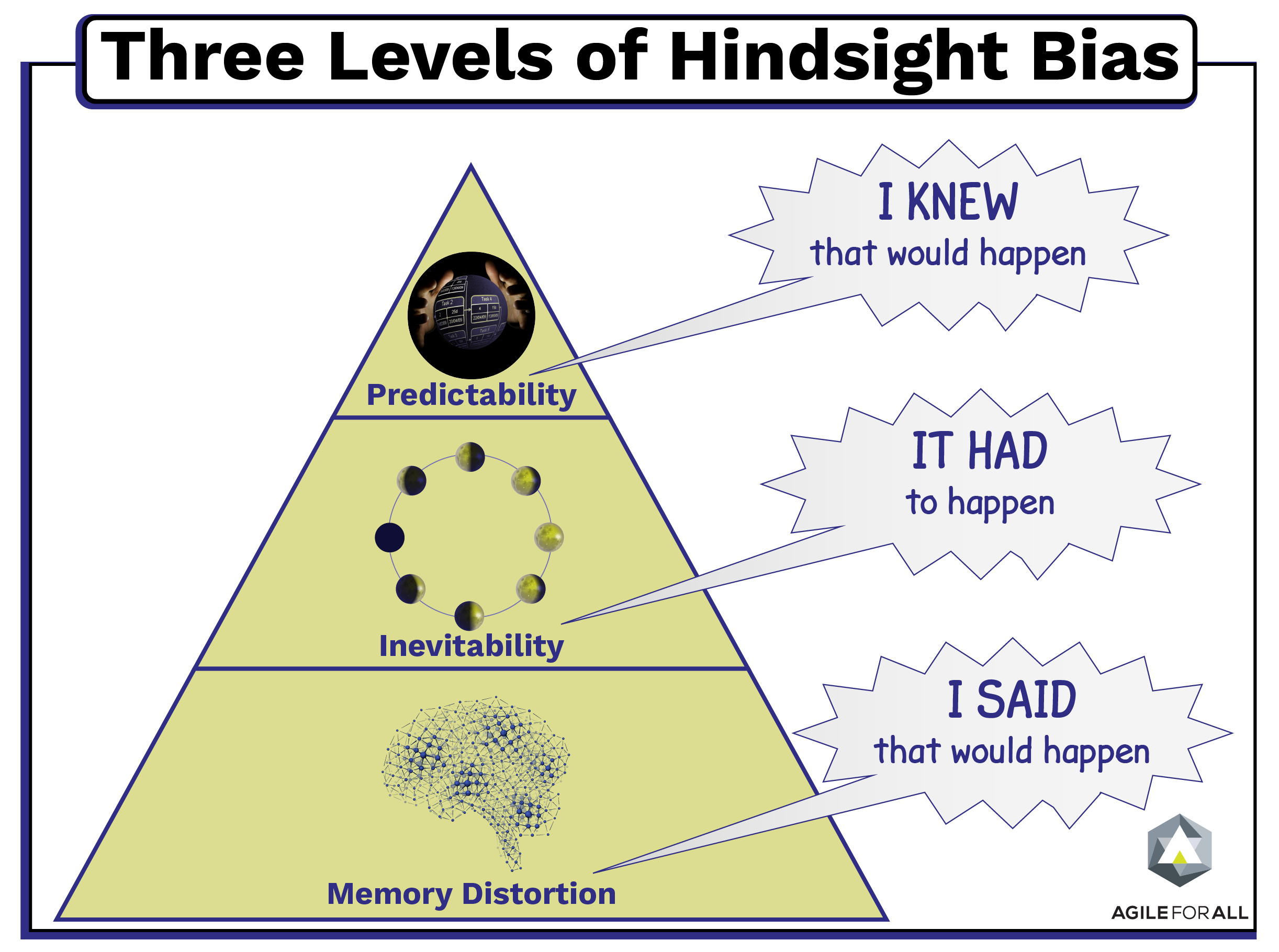

In an article published in the September 2012 issue of Perspectives on Psychological Science, Neal Roese of the Kellogg School of Management at Northwestern University and Kathleen Vohs of the Carlson School of Management at the University of Minnesota review the existing research on hindsight bias. The authors break this bias into three levels that stack on top of each other:

The first level, Memory Distortion, involves misremembering an earlier opinion or judgment (“I said it would happen”).

The second level, Inevitability, centers on our belief that the event was inevitable (“It had to happen”).

The third level, Predictability, involves the belief that we personally could have foreseen the event (“I knew it would happen”).

Impact on Complexity

Complex work is by definition unpredictable. But Hindsight Bias tricks our brains into believing that past events were more predictable than they were. Unmitigated, Hindsight Bias leads us to treat complex work as predictable. Instead of using empirical processes based on transparency and frequent inspection and adaptation loops, we do extensive up-front planning and implement stricter controls to meet the original plan. Years of “lessons learned” sessions caused us to move further and further from the right approach.

One of the tricky things about cognitive biases is that they’re hard-wired into our brains, so we cannot overcome them. Awareness of Hindsight Bias doesn’t mean you are no longer affected by it. In his seminal book “Thinking, Fast and Slow”, Daniel Kahneman describes two thinking systems within our brain. System One is the fast, pattern-matching part of our brain, where our cognitive biases live. It runs the show, due to the evolutionary need to conserve energy. System Two is the slow, logical part of our brain, the part we usually have in mind when we say that we are “thinking”. The catch is that it only kicks in when System One doesn’t recognize a pattern and essentially says “hey, System Two, take a look at this”. We are not as logical as we think.

To mitigate any cognitive bias, we need to build habits and processes that intentionally slow us down and ask System Two to take over for a bit. Using the language of experimentation is one way we can engage this part of our brain. Instead of stating a “requirement” (we KNOW we need this), we state a hypothesis (we believe our users want this, and we’ll know we’re right or wrong when ______).

Working with processes that have regular feedback loops is another. The Sprint Review Meeting in Scrum is intended to be a pause where we engage System Two and say “does this thing that we built actually meet the need we thought it would? If not, what did we learn and how does that influence what we should build next?”

These are the types of approaches that are appropriate for complex work. They allow us to mitigate our Hindsight Bias.

No, we probably couldn’t have planned better – instead, we should have planned to use a more empirical approach to the work, and stopped punishing people for not being able to predict the unpredictable.

Where have you seen Hindsight Bias show up in your work? How have you been successful at mitigating it? Comment below to let us know.

“…in none of those projects had anyone died.” Probably not literally, but, based on such sessions I used to attend, people’s spirits had died, at least somewhat. And, enough of these having happened, probably a lot more spirit had been killed.

“…see the event as having been predictable.” Usually this opinion comes from those who did not participate in the actual work, i.e., “You people should have anticipated this.” Sort of ‘Hindsight Foresight’ at work.

Oh yes, most definitely. AND, I’ve seen people’s relationships with partners die or at best get put on life support, people get very sick, etc.

So true, though even participants often get caught in the trap of thinking things could have been planned better.